Global CO2 emissions from wildfires could be c.25% as large as anthropogenic CO2 emissions, while burnt areas in the US reached a joint all-time record in 2020. Hence this note reconsiders some nature-based solutions to climate change. Hands-off forest conservation may do more harm than good in fire-prone areas. Conversely, sustainable forestry, carbon-negative materials, biochar and biomass energy look more favorable.

Forest conservation: the advantages?

I took the photo below on New Year's Day from the top of a mountain in Vermont, looking east over the forest, which stretches all the way to New Hampshire. If you had asked me then, I would have insisted that forest conservation was a crucial component of the world's roadmap to Net Zero, and a virtuous source of carbon credits, albeit slightly less virtuous than incremental reforestation.

Forests store 200-300 tons of carbon per hectare. Moreover, deforestation contributes 6.5GTpa of emissions, as 10M hectares of the world's 3.5bn hectares of forests are torn down every year. This is the single largest anthropogenic emissions source globally (data below).
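As a rough cross-check on these figures, the sketch below divides the deforestation emissions by the area cleared each year, then converts CO2 into carbon using the molar mass ratio of 12/44. It assumes the 6.5GTpa figure is quoted in tons of CO2 (the text does not say), and the function name is purely illustrative.

```python
# Cross-check: implied carbon released per hectare of deforestation.
# Assumption: the 6.5 GTpa figure is in tons of CO2, not tons of carbon.

CO2_TO_C = 12.0 / 44.0  # molar mass of carbon / molar mass of CO2


def implied_carbon_per_hectare(emissions_t_co2: float, area_ha: float) -> float:
    """Return the implied tons of carbon released per hectare cleared."""
    co2_per_ha = emissions_t_co2 / area_ha
    return co2_per_ha * CO2_TO_C


deforestation_emissions = 6.5e9  # tons of CO2 per year, from the text
area_cleared = 10e6              # hectares per year, from the text

co2_per_ha = deforestation_emissions / area_cleared
c_per_ha = implied_carbon_per_hectare(deforestation_emissions, area_cleared)

print(f"{co2_per_ha:.0f} t CO2/ha, equivalent to {c_per_ha:.0f} t C/ha")
```

Under that assumption, the implied release is around 177 tons of carbon per hectare, slightly below the 200-300 ton storage range quoted above.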

Viewed from this perspective, stemming the wanton destruction of nature seems like an important climate objective. We have even gone so far as to argue the US could end up placing sanctions on Brazil by the end of 2021, for Bolsonaro’s recent renewed assault on the Amazon.

Forest conservation: the disadvantages?

But there is another side of the coin. This has become more apparent to me after spending three months in Oregon, Utah, Nebraska, California, Colorado, South Dakota and Montana, during a stint of nomadic working this year. You see a lot of landscapes like this. They are beautiful (especially with a smiling dog in the foreground). But they are bone dry.

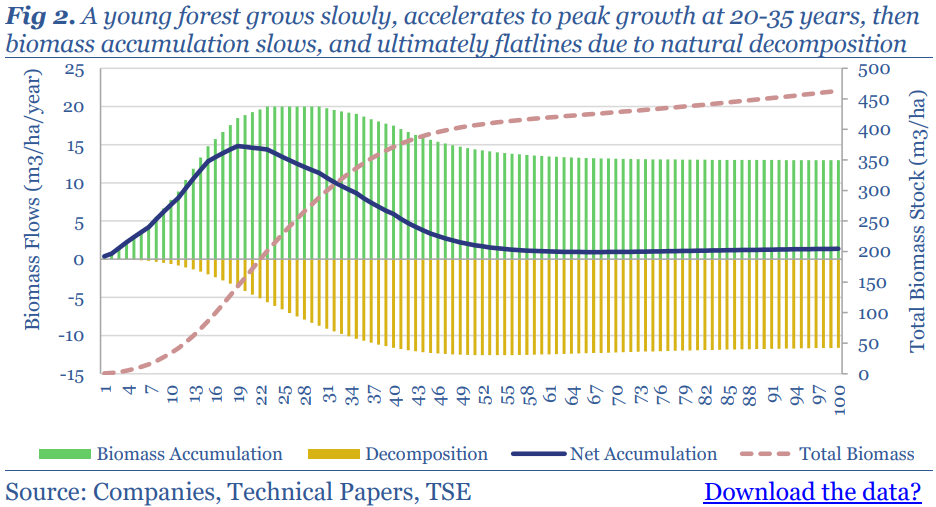

Mature forests also stop sequestering CO2 at some point between 50 and 200 years of age. First, the rate of tree growth slows down by 50% (chart below). Moreover, the rate of CO2 release from the decomposition of dead matter eventually catches up with the rate of CO2 fixation via photosynthesis. And other dead matter accumulates on the forest floor and dries out…

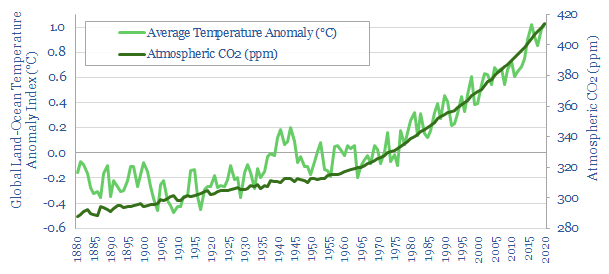

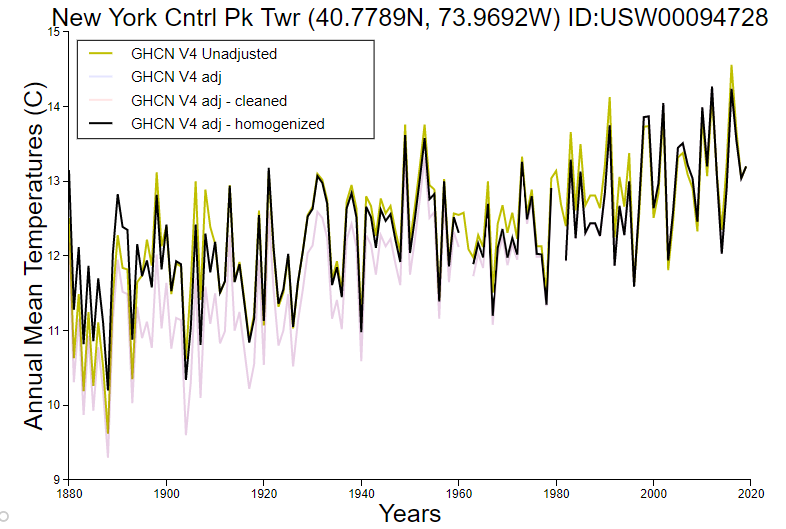

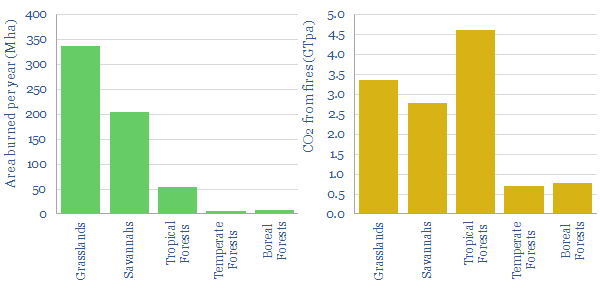

This amplifies the risk of forest fires in older forests. One estimate in the technical literature is that 600M hectares of the Earth burn each year, emitting 12GTpa of CO2e. For perspective, this equates to 0.3% of the world's forests burning every year, and the total toll of wildfires may be as large as 25% of global anthropogenic CO2.

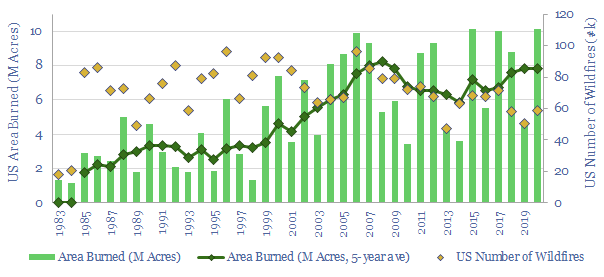

In the United States, 2020 saw 10.1M acres burn in wildfires, which is the joint-highest of any year on record (chart below). Interestingly, the prevalence of wildfires has actually fallen by 20%. But when fire does break out, the average fire is around 3x larger.
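Taken together, these two trends imply a sharp rise in total burnt area. The sketch below multiplies the change in the number of fires by the change in average fire size, and converts the 2020 burnt area into hectares for comparison with the global figures. It assumes the 20% decline refers to the number of fires, and that both changes are measured over the same period, which the text does not specify.

```python
ACRES_TO_HECTARES = 0.40468564224  # exact, by definition of the international acre


def burnt_area_multiplier(fire_count_change: float, size_multiplier: float) -> float:
    """Total burnt area scales with (number of fires) x (average fire size)."""
    return (1.0 + fire_count_change) * size_multiplier


# Assumption: 20% fewer fires, each around 3x larger, over the same period.
multiplier = burnt_area_multiplier(fire_count_change=-0.20, size_multiplier=3.0)
burnt_2020_ha = 10.1e6 * ACRES_TO_HECTARES

print(f"Implied change in total burnt area: {multiplier:.1f}x")
print(f"2020 US burnt area: {burnt_2020_ha / 1e6:.1f}M hectares")
```

On these assumptions, fewer but larger fires would still burn around 2.4x more land in total.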

The single largest cause, cited in technical papers that we reviewed, was the accumulation of biomass in unmanaged forests. US forest cover has grown for 70 continuous years. And a second cited cause is climate change.

Seen through this lens, forest conservation policies may need to be re-thought. Is it possible, especially in dry geographies, that forest conservation simply encourages the accumulation of biomass that will later lead to life-threatening conflagrations and carbon releases?

Wildfires in theory?

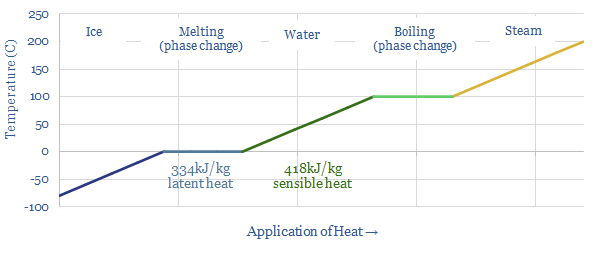

Three elements are needed for a fire to occur: heat, fuel and oxygen. There is little chance of controlling heat or oxygen in the environment. Hence the only practical option to prevent wildfires is to remove fuel. Note this is totally at odds with the strictest forest conservation practices, which restrict any removal of biomass from a natural ecosystem.

There are also different types of fire, of which crown fires are the most extensive and the most devastating. Specifically, ground fires consume mostly duff layers, produce few visible flames and can even go undetected, smoldering for days or weeks. Surface fires produce small flaming fronts that consume needles, moss, lichen and vegetation. They can kill up to 75% of trees, but can also be fought by ground crews. Full-blown crown fires become active when there is enough heat and a “ladder” for the fire to climb up into tree canopies. They can kill 100% of trees and also burn off 10-60% of the carbon in soils beneath forests.

Fire kills trees by killing the cambium, the layer of living cells inside the tree bark that produces new wood and bark. Foliage is also scorched, buds are killed off and roots are damaged. Thus what remains is a charred stock of dead biomass (as pictured from the car window below, east of the Cascade Mountains, in Oregon). It can take 125 years for a forest to “recover” the carbon stocks burned off in such a conflagration.

Finally, a “crowning index” is a metric used to quantify fire proneness. It is defined as the minimum wind speed that is necessary to sustain a crown fire in the canopy layer. The lower the index, the more easily a crown fire takes hold: below 25mph translates into high hazard, 25-50mph is moderate and >50mph is low hazard.
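The hazard bands above can be sketched as a simple lookup. This is an illustrative classifier only: the function name is hypothetical, and treating exactly 25mph as moderate and exactly 50mph as moderate are assumptions, since the text does not say how the boundaries are handled.

```python
def crown_fire_hazard(crowning_index_mph: float) -> str:
    """Map a crowning index (minimum wind speed, in mph, needed to sustain
    a crown fire) onto the hazard bands quoted in the text."""
    if crowning_index_mph < 0:
        raise ValueError("crowning index cannot be negative")
    if crowning_index_mph < 25:
        return "high"      # light winds are enough to sustain a crown fire
    if crowning_index_mph <= 50:
        return "moderate"  # boundary handling here is an assumption
    return "low"           # only strong winds would sustain a crown fire


for index in (18, 25, 40, 82):
    print(f"{index} mph -> {crown_fire_hazard(index)} hazard")
```

Note that the scale is inverted relative to intuition: a higher crowning index means a lower hazard, because stronger winds are needed before a crown fire can be sustained.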

How are wildfires prevented?

The only practical way to prevent wildfires is to remove surface fuels. But there are many different approaches to doing so.

One common approach is controlled burning or “underburning” of specific areas, or the gathering and burning off of “slash”. The idea is to remove biomass (fuel). (I witnessed a controlled burn project last week, in April 2021, in Oregon, where a dry summer is anticipated.) It is justified as a form of ‘carbon insurance’, giving up the carbon in the burned off material, in order to safeguard the remainder. But clearly, burning off forest carbon is not the best option from a CO2 removals perspective.

Thinning is another approach that fells small and vulnerable trees, while leaving behind larger trees with thicker bark (that insulates the cambium). A related approach, thinning from below, removes trees of intermediate height, which could enable a fire to jump from the ground level to the canopy layer. Lower limbs below 10-12′ may also be pruned for similar reasons. Again, this biomass may be burned in a controlled fire, or piled up to decompose. Again, from a CO2 perspective, there should be better options.

Mastication is a mechanical technique for fuels reduction, chopping, mowing and mulching ladder fuels such as brush and smaller trees. The resultant wood chips form a compact layer of material, which can be distributed evenly around a site and may make the area more resistant to fire. An advantage is that the carbon is not burned off. But it does decompose over time. Another drawback is the potential to wound trees if the operator is not skilled. Fires in masticated fuels may also burn for longer than other fires.

Species selection may be a practical way to improve fire resistance. For example, Ponderosa pine is noted as a good fire-resistant species, as it has an open crown, high moisture content in the foliage, and thick bud scales that help it survive fire. Some hardwoods, such as bigleaf maple, red alder and Oregon white oak, have high moisture content and fewer volatile oils in their foliage. As a result, they burn at low intensities, if they do catch alight.

Digging a fire line down to mineral soil deprives the fire of fuel and will stop its progress. This requires harvesting all of the fuel from a particular area. It can integrate well with sustainable forestry, if a line is cleared through a large forest stand each year, during harvesting, forming a natural break for fires (example below).

Comprehensive forest management is likely to be most effective, based on the studies we reviewed. This encompasses the systematic removal of biomass until tree coverage is only 40-50 ft² per acre, primarily comprising the largest and most fire-resistant trees. One study showed that this strategy increased the crowning index of high-hazard lands from around 25mph to 82mph, moving 90% of all treated acres into low-hazard conditions, while 73% of the land would still be classed as low-hazard 30 years afterwards. This was substantially more effective than thinning or 50% biomass removals. It also generated a profit of $675/acre, while the other methods cost $300-700/acre. The disadvantage is that you are lessening the risk of forest fires by lessening the extent of the forest. So this option may need to be reserved for select areas.
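To put the per-acre economics into perspective, the sketch below scales them to a stand. The 1,000-acre stand size and the function name are hypothetical, chosen only for illustration. The per-acre figures are the ones quoted above, with costs entered as negative cash flows.

```python
def net_cash_flow(acres: float, dollars_per_acre: float) -> float:
    """Net cash flow of treating a stand; negative values are net costs."""
    return acres * dollars_per_acre


STAND_ACRES = 1_000  # hypothetical stand size, not from the source

comprehensive = net_cash_flow(STAND_ACRES, 675.0)        # profit of $675/acre
other_cheapest = net_cash_flow(STAND_ACRES, -300.0)      # cost of $300/acre
other_costliest = net_cash_flow(STAND_ACRES, -700.0)     # cost of $700/acre

# Swing in cash flow from choosing comprehensive treatment over the alternatives.
advantage_min = comprehensive - other_cheapest
advantage_max = comprehensive - other_costliest

print(f"Comprehensive treatment: ${comprehensive:,.0f}")
print(f"Other methods: ${other_costliest:,.0f} to ${other_cheapest:,.0f}")
print(f"Advantage: ${advantage_min:,.0f} to ${advantage_max:,.0f}")
```

On these inputs, the swing is $975-1,375 per acre in favor of comprehensive treatment, before considering the smaller forest that remains afterwards.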

What conclusions for forest conservation carbon credits?

Our conclusion from evaluating wildfires is that forest conservation projects have very debatable carbon credentials, especially in fire-prone areas, and as the Earth warms. This adds to our prior fear that they are the least “incremental” form of nature-based solution.

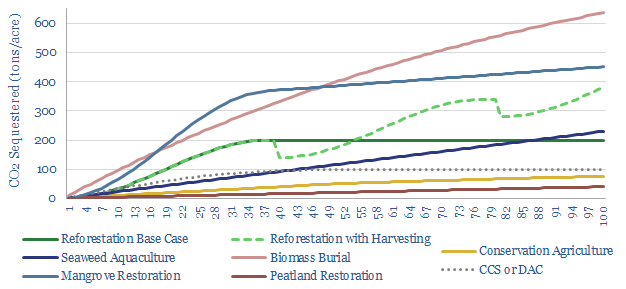

Active forestry, with periodic harvesting and re-planting, can sequester around 2x more CO2 over a century than a simple approach of restoring a forest and walking away.

We see the same realization reflected in the nature-based carbon offsets being undertaken by 35 large corporations. Reforestation projects were 3x more prevalent than forest conservation projects in 2018-20.

We argue corporations will increasingly establish new internal groups to vet and procure the most reliable and cost-effective carbon removals. This will likely de-prioritize forest conservation, and instead prioritize reforestation, and a variety of active forestry initiatives.

Active forestry as an effective tool for climate mitigation?

“Active and responsible forest management is more effective in capturing and storing atmospheric carbon than a policy of hands off management”, according to one technical paper that we reviewed. Hence what are the best options that align with our research?

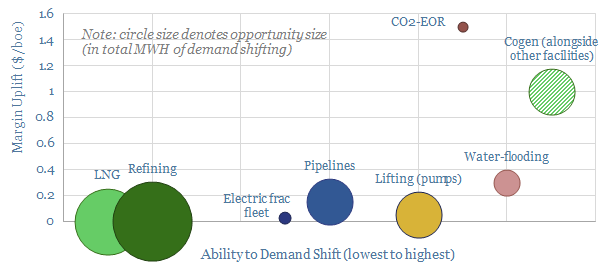

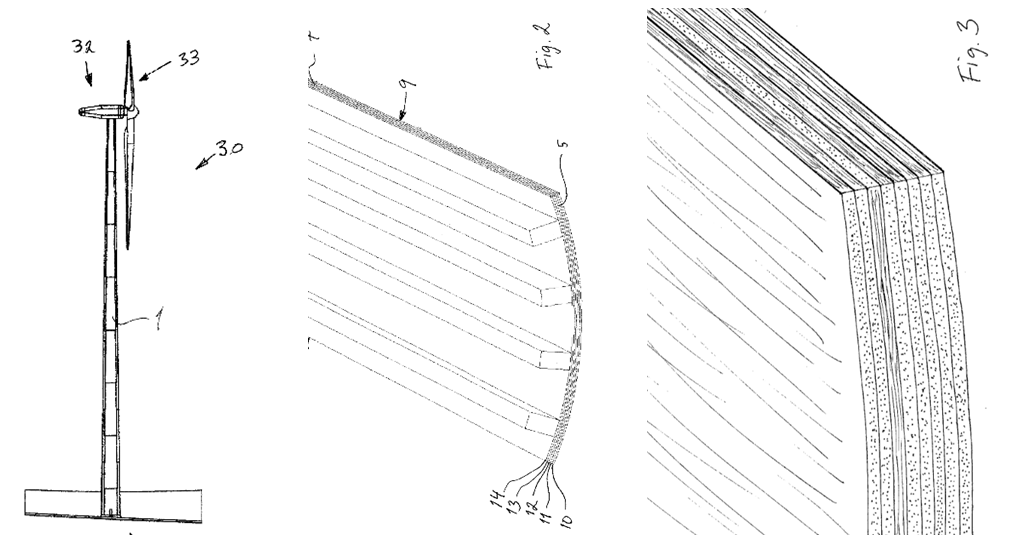

Comprehensive biomass removals from select, high-risk forest areas are likely to be the most effective fire-prevention method of the options reviewed above. An advantage is that larger trees can be used to make carbon-negative construction materials, such as cross-laminated timber, for use in buildings, or even in novel applications such as wind turbines. Our note below reviews the opportunity, which is among the most favored in our research, and even more so after this review of mitigating forest fire risks.

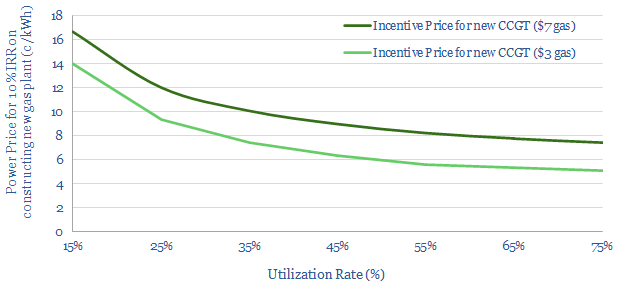

Forest residues can also be gathered and turned into biochar, a miracle material with uses in agriculture. Our models yield 20% IRRs without any policy support, at biochar prices of $600/ton, while allowing producers to pay $40/ton for biomass feedstocks (including transportation). An advantage is that biochar can be made from offcuts and other forest debris.

We are also growing more constructive on biomass power, in small quantities, where the burned material comes unequivocally from forest debris, which might otherwise exacerbate the risk of fires. But it is still debatable. The chart below shows how CO2 credentials vary: the left-hand end of each range assumes all biomass fuel would otherwise have decomposed, while the right-hand end assumes all forest carbon would otherwise have remained standing.

Sources

Bowyer, J., Bratkovich, S., Frank, M., Fernholz, K., Howe, J. & Stai, S. (2011). Managing Forests for Carbon Mitigation.

Fiedler, C. E., Keegan, C. E., Woodall, C. W. & Morgan, T. A. (2004). A Strategic Assessment of Crown Fire Hazard in Montana: Potential Effectiveness and Costs of Hazard Reduction Treatments. United States Department of Agriculture, Forest Service.

Fitzgerald, S. & Bennett, M. (2013). A Land Manager’s Guide for Creating Fire-Resistant Forests. Oregon State University Extension Service, EM 9087.

Loehman, R. A., Reinhardt, E. & Riley, K. L. (2014). Wildland fire emissions, carbon, and climate: Seeing the forest and the trees – A cross-scale assessment of wildfire and carbon dynamics in fire-prone, forested ecosystems. Forest Ecology and Management, 317, 9–19.